Neural Illumination: Lighting Prediction for Indoor Environments

Abstract

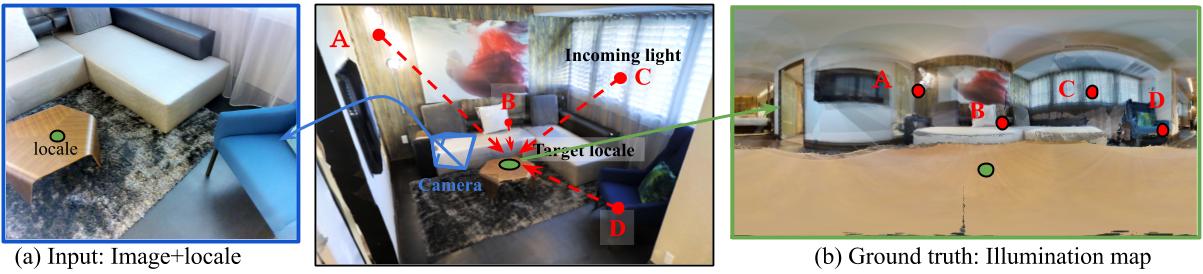

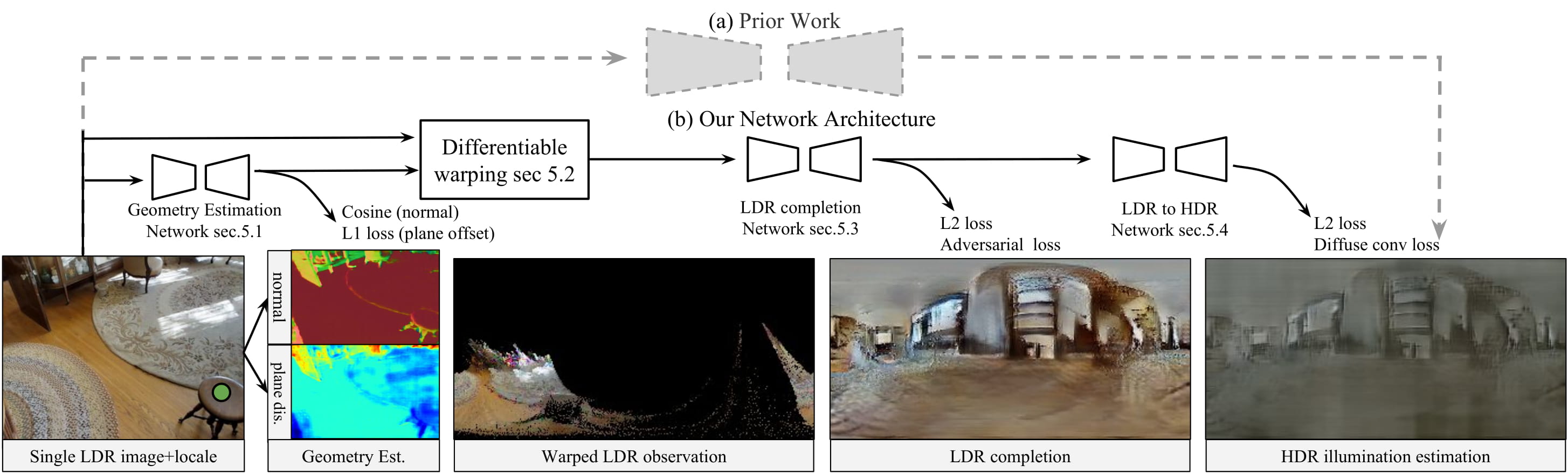

This paper addresses the task of estimating the light arriving from all directions at a 3D point observed at a selected pixel in an RGB image. The task is challenging because it requires predicting a mapping from a partial scene observation by a camera to a complete illumination map for a selected position. That mapping depends on the 3D location of the selection, the distribution of unobserved light sources, the occlusions caused by scene geometry, and other factors. Previous methods attempt to learn this complex mapping directly with a single black-box neural network, which often fails to estimate high-frequency lighting details for scenes with complicated 3D geometry. Instead, we propose "Neural Illumination," a new approach that decomposes illumination prediction into several simpler differentiable sub-tasks: 1) geometry estimation, 2) scene completion, and 3) LDR-to-HDR estimation. The advantage of this approach is that each sub-task is relatively easy to learn and can be trained with direct supervision, while the whole pipeline remains fully differentiable and can be fine-tuned with end-to-end supervision. Experiments show that our approach performs significantly better than prior work, both quantitatively and qualitatively.
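The geometry sub-task matters because observed pixels can only be reused for lighting once they are re-expressed as directions seen from the selected 3D point. The sketch below illustrates that geometric step under stated assumptions: a pinhole camera with known intrinsics, a per-pixel depth map that is simply given (not estimated), and an equirectangular output panorama. All function names, the axis convention, and the nearest-point z-buffer are hypothetical choices for illustration, not the authors' implementation.

```python
import math

def unproject(u, v, depth, fx, fy, cx, cy):
    # Back-project pixel (u, v) with depth (distance along the optical axis)
    # into camera space using a pinhole model: x right, y down, z forward.
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)

def direction_to_equirect(d, width, height):
    # Convert a 3D direction into continuous (col, row) coordinates of a
    # width x height equirectangular panorama; col = width / 2 faces +z.
    x, y, z = d
    norm = math.sqrt(x * x + y * y + z * z)
    lon = math.atan2(x, z)                             # in [-pi, pi]
    lat = math.asin(max(-1.0, min(1.0, -y / norm)))    # +pi/2 = straight up
    col = (lon / (2 * math.pi) + 0.5) * width
    row = (0.5 - lat / math.pi) * height
    return col, row

def warp_to_panorama(colored_points, query, width, height):
    # Splat colored 3D points into a panorama centered at `query`.
    # A z-buffer keeps the nearest point per cell; cells left as None were
    # never observed by the camera.
    pano = [[None] * width for _ in range(height)]
    zbuf = [[math.inf] * width for _ in range(height)]
    for p, color in colored_points:
        d = (p[0] - query[0], p[1] - query[1], p[2] - query[2])
        dist = math.sqrt(d[0] ** 2 + d[1] ** 2 + d[2] ** 2)
        if dist == 0.0:
            continue  # the query point itself has no direction
        col, row = direction_to_equirect(d, width, height)
        c = min(int(col), width - 1)
        r = min(int(row), height - 1)
        if dist < zbuf[r][c]:
            zbuf[r][c] = dist
            pano[r][c] = color
    return pano

def observed_panorama(image, depth, intrinsics, pixel, width=64, height=32):
    # image[v][u] -> color, depth[v][u] -> depth, pixel = (u, v) selected by the user.
    fx, fy, cx, cy = intrinsics
    points = [
        (unproject(u, v, depth[v][u], fx, fy, cx, cy), image[v][u])
        for v in range(len(image))
        for u in range(len(image[0]))
    ]
    u0, v0 = pixel
    query = unproject(u0, v0, depth[v0][u0], fx, fy, cx, cy)
    return warp_to_panorama(points, query, width, height)
```

For example, `warp_to_panorama([((0, 0, 2), "red"), ((0, 0, 1), "blue")], (0, 0, 0), 8, 4)` places "blue" at row 2, column 4, because the nearer point occludes the farther one along the same direction. Cells left as `None` are directions the camera never saw; in the decomposition described above, those are what scene completion would have to predict before an LDR-to-HDR step lifts the map to high dynamic range.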

Paper


Shuran Song and Thomas Funkhouser

Neural Illumination: Lighting Prediction for Indoor Environments (CVPR 2019)

[paper] [bibtex] [oral talk] [poster]

Code and Dataset

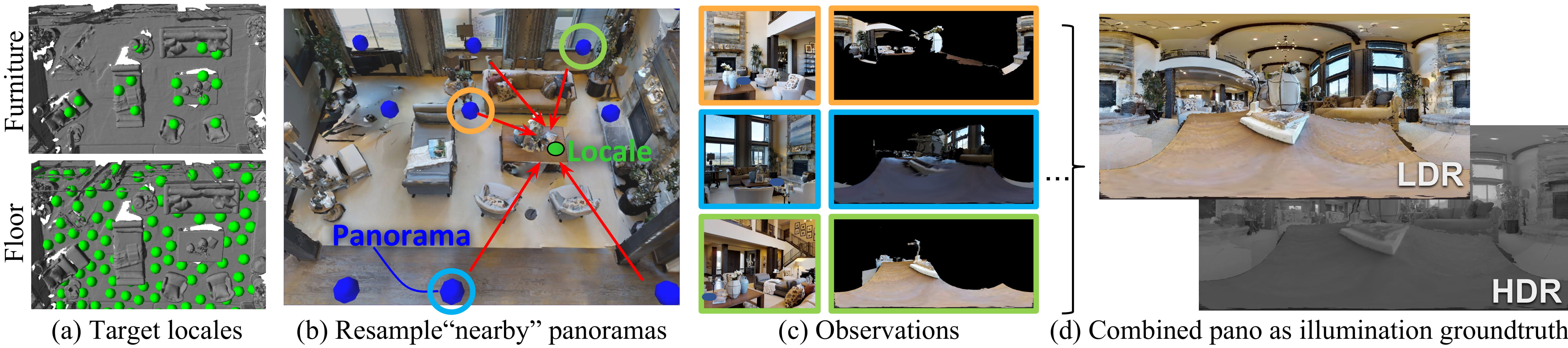

- We generated the illumination data from the Matterport3D dataset. Please send an email to the dataset organizer(s) to confirm your agreement with the dataset's terms, and cc Shuran Song. Once your request is approved, you will receive download links for the data.